
The physics of single-slit diffraction

Diffraction is a physical phenomenon in which a wave (acoustic, water, electromagnetic, etc.) bends around the edges of an obstacle. When light passes through a slit, for example, most of the original beam is blocked, and the pattern projected on a back screen resembles the shape of the aperture. However, once the aperture becomes comparable in size to the wavelength of the incoming light, an interesting phenomenon arises: the light spreads out and interferes with itself, forming a diffraction pattern. In this article, we will look at the physics and mathematics of diffraction through a narrow slit.

Single-slit diffraction in 1D

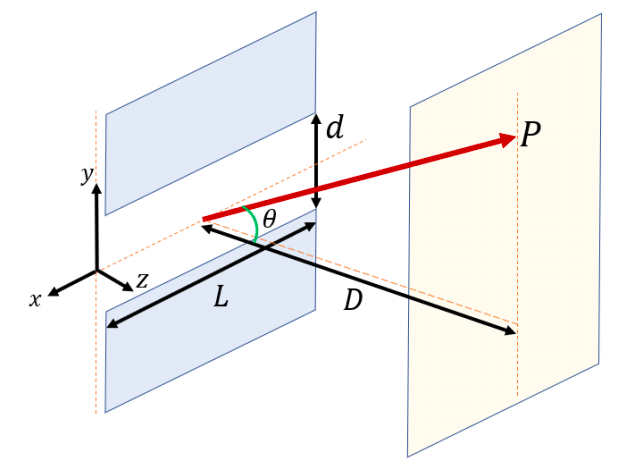

One- and two-dimensional diffraction patterns can be formed by blocking a source of light with an opaque screen containing a small aperture of arbitrary shape, and allowing the small portion of light that passes through to be projected onto a second screen, as shown in Figure 1. In most practical cases, spherical wavefronts from the source can be treated as planar, as long as the distance between the projection screen and the source is considerably larger than the largest feature of the aperture or obstacle. To predict how these diffraction patterns will look, we can analyse aperture interference patterns using Huygens’ principle and apply suitable approximations depending on the scale of the problem.
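As a rough numerical illustration of Huygens’ principle, the sketch below treats the simplest one-dimensional case: a single slit of width d, a distance D from the screen, filled with many point sources whose spherical wavelets are summed at each screen position. The function name `huygens_intensity`, the parameter `n_sources`, and all numerical values (a 500 nm wavelength, a 50 µm slit, a 1 m screen distance) are assumptions chosen for illustration, not values from this article.

```python
import numpy as np

def huygens_intensity(x_screen, wavelength, d, D, n_sources=400):
    """Intensity on a screen from n_sources point emitters spread across a slit.

    x_screen   : screen positions (m), measured from the slit axis
    wavelength : wavelength of the light (m)
    d          : slit width (m)
    D          : slit-to-screen distance (m)
    """
    k = 2 * np.pi / wavelength  # wavenumber
    # Midpoints of n_sources equal sub-intervals of the slit, centred on y = 0
    y = (np.arange(n_sources) + 0.5) / n_sources * d - d / 2
    x = np.atleast_1d(np.asarray(x_screen, dtype=float))
    # Distance from every source (columns) to every screen point (rows)
    r = np.sqrt(D**2 + (x[:, None] - y[None, :])**2)
    # Superpose the spherical wavelets exp(i k r) / r, then take |field|^2
    field = np.sum(np.exp(1j * k * r) / r, axis=1)
    return np.abs(field)**2

# Illustrative (assumed) values
wavelength = 500e-9   # 500 nm
d = 50e-6             # 50 micrometre slit
D = 1.0               # 1 m to the screen

x = np.linspace(-0.03, 0.03, 601)        # screen positions (m)
intensity = huygens_intensity(x, wavelength, d, D)
intensity /= intensity.max()             # normalise to the brightest point
```

Plotting `intensity` against `x` (for example with matplotlib) should show a bright central fringe flanked by much weaker side fringes. No planar-wavefront approximation is made here: each path length is computed exactly, which makes the sketch a useful cross-check on approximate results, at the cost of being slower than a closed-form expression.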

The simplest case we can look at is a single slit of width d and length L, as shown in Figure 2. We will consider each point on the slit as its own source of spherical waves. Since in practical situations the distance D between the aperture and the screen is much larger than the aperture dimensions (D >> d), we can assume that planar wavefronts reach the screen. Furthermore, if we take two consecutive sources separated by a distance y, as shown in Figure 3, we can define the phase difference as